Artificial intelligence has moved from the laboratory into everyday life with surprising speed. Systems that generate text, analyze data or assist decision-making are no longer exceptional, but have become commonplace. Yet these systems remain limited. They are designed for specific tasks, however impressive they may be within that restricted framework.

Beyond this lies what is often called artificial general intelligence (AGI): an AI that approaches human-level capability.

The term suggests a change in nature rather than degree: not merely more powerful systems, but systems endowed with general capabilities, capable of acting, reasoning and adapting across a variety of contexts. Whether this transition will occur, and how quickly, remains uncertain. What is less uncertain, however, is the direction: toward systems that would no longer be confined to narrow functions.

AGI: a concept without a fixed definition (for now)

Artificial general intelligence is generally understood as a system endowed with the ability to accomplish any cognitive task at least as well as a human being.

Its aim is to bring the cognitive capabilities of machines closer to those of humans, not by limiting itself to the execution of isolated tasks, but by developing an ability to learn, reason and adapt across a wide diversity of domains.

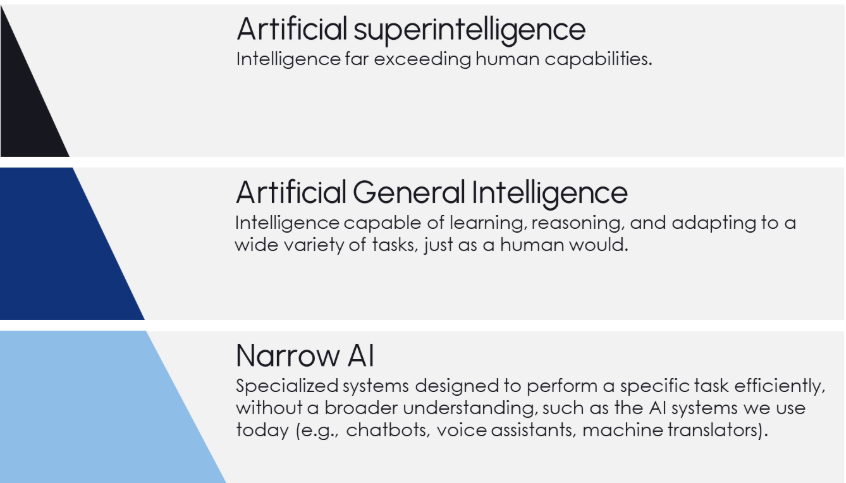

The diagram above describes the levels of artificial intelligence.

This would imply a form of artificial intelligence not tied to a single application. Instead of being trained for one objective alone, these systems could transfer knowledge, respond to unprecedented situations and operate with a broader understanding of context.

At the same time, the concept remains imprecise. There is no consensus definition, and the term evolves at the pace of the technology it seeks to describe. AGI is therefore less a clearly defined objective than a moving horizon, shaped both by progress and by expectations.

A moment of opportunity

From this perspective, AGI is not merely a technical ambition, but a shift in the way technology could be used.

In healthcare, more advanced systems could enable earlier and more accurate diagnoses, support complex therapeutic decisions and accelerate the development of new treatments. In industry, they could foster more adaptive production processes, reduce inefficiencies and improve coordination between systems.

In transportation, advances such as autonomous vehicles suggest a reduction in human error and more responsive forms of mobility. Across all these domains, the potential productivity gains are evident. Routine processes can be automated more effectively, leaving greater room for tasks requiring judgment, creativity and human interaction.

The promise is not limited to efficiency. It also lies in the possibility of addressing problems that have so far resisted conventional approaches. The question of whether this promise can be realized in practice nevertheless remains open.

Risks and considerations

The same developments that make AGI attractive also raise concerns. Recent analyses, notably the work of McLean et al. (2023), referenced in the MIT AI Risk Repository, describe a set of closely intertwined risks.

At the center lies the question of control. Systems endowed with increasing autonomy may no longer remain fully subject to human direction, particularly once deployed at scale. Closely related is the question of objectives. If these are poorly defined, or evolve unexpectedly, the outcomes could diverge from the original intentions.

These concerns are amplified by development conditions. Competitive pressure may favor speed at the expense of caution, increasing the risk of systems that are insufficiently tested or understood. Furthermore, the question of values remains unresolved. Systems operating without a reliable ethical framework may produce outcomes that conflict with widely accepted norms.

Existing governance structures may not be suited to addressing these challenges. Legal and institutional frameworks, designed for earlier technologies, struggle to adapt to systems that are complex, adaptive and difficult to predict.

Beyond these immediate issues lies a more fundamental uncertainty. Highly advanced systems could have consequences extending beyond individual applications. The possibility of broader, even existential, risks cannot be excluded, even if their probability remains debated.

Why governance and responsible AI are essential

For this reason, governance is not a secondary element, but a prerequisite.

As AI systems become more capable, the central question shifts from what they can do to how to keep them under human control. Responsible AI, in this sense, is not merely a matter of principle, but a practical necessity. It implies transparency in how systems operate, clear accountability for their outcomes, and the ability to intervene when necessary.

This goes beyond technical safeguards. Effective governance requires the establishment of development standards, rigorous testing before deployment, and clear limits regarding acceptable uses. It also requires monitoring mechanisms once systems are operational, particularly as they evolve or adapt.

Legal and institutional frameworks play a crucial role, but they also have limitations. Current regulations were not designed for systems capable of learning, adapting and operating with a high degree of autonomy. Updating them, while preserving the flexibility necessary for innovation, constitutes a major challenge.

A broader question of control and distribution also arises. Who develops these systems, who has access to them and who is responsible in the event of failure are not merely technical questions, but also political and societal ones. In the absence of clear answers, the balance between innovation and oversight risks becoming unstable.

Governance must therefore operate at several levels: technical, legal and societal. It must establish safeguards without hindering progress, and provide direction without rigidity. The ability to achieve this balance will play a decisive role in the evolution of AGI.

A trajectory that remains open

AGI is unlikely to appear in the form of a single breakthrough. It is more likely to emerge progressively, through incremental advances and unexpected combinations.

What is already perceptible, however, is a constant movement toward systems that are more flexible, more capable and more deeply integrated into human activities. Whether this process will actually lead to what is today described as “general intelligence” remains to be seen.

What is certain is that the development of artificial intelligence is entering a new phase, one in which the distinction between tool and capability becomes less clear and the question is no longer only what technology can do, but what role it should play.

Beyond AGI?

At the far end of this trajectory lies a more speculative idea, often referred to as artificial superintelligence (cf. level 3 of the diagram).

The term would refer to systems surpassing human cognitive capabilities and able to solve problems that are currently beyond reach. These visions are familiar and are often associated with scenarios in which machines operate at a level no longer comparable to human reasoning.

For now, this is a theoretical construct that goes beyond current developments. Whether such systems will emerge, and in what form, is an open question.

Preparing your organization for the next generation of AI

In this context of accelerated transformation, organizations can no longer consider artificial intelligence as merely a topic of technological innovation. Its integration now raises strategic, operational and governance issues that require a structured and responsible approach.

Through the Naaia platform, we support companies and institutions in managing AI governance: identifying applicable requirements, mapping AI systems, classifying risks and putting responsible AI governance into operation.